Time Domain Filter Estimation

System identification is the subject of identifying filter coefficients given measurements of the input and output signals [46,78]. For example, one application is amplifier modeling, in which we measure (1) the normal output of an electric guitar (provided by the pick-ups), and (2) the output of a microphone placed in front of the amplifier we wish to model. The guitar may be played in a variety of ways to create a collection of input/output data to use in identifying a model of the amplifier's ``sound.'' There are many commercial products which offer ``virtual amplifier'' presets developed partly in such a way.F.6 One can similarly model electric guitars themselves by measuring the pick signal delivered to the string (as the input) and the normal pick-up-mix output signal. A separate identification is needed for each switch and tone-control position. After identifying a sampling of models, ways can be found to interpolate among the sampled settings, thereby providing ``virtual'' tone-control knobs that respond like the real ones [101].

In the notation of the §F.1, assume we know

![]() and

and

![]() and wish to solve for the filter impulse response

and wish to solve for the filter impulse response

![]() . We now outline a simple yet

practical method for doing this, which follows readily from the

discussion of the previous section.

. We now outline a simple yet

practical method for doing this, which follows readily from the

discussion of the previous section.

Recall that convolution is commutative. In terms of the matrix representation of §F.3, this implies that the input signal and the filter can switch places to give

![$\displaystyle \underbrace{%

\left[\begin{array}{c}

y_0 \\ [2pt] y_1 \\ [2pt] y...

... h_2 \\ [2pt] h_3\\ [2pt] h_4\end{array}\right]}_{\displaystyle\underline{h}},

$](http://www.dsprelated.com/josimages_new/filters/img2031.png)

Here we have indicated the general case for a length

The Moore-Penrose pseudoinverse is easy to

derive.F.7 First multiply Eq.![]() (F.7) on the left by the

transpose of

(F.7) on the left by the

transpose of

![]() in order to obtain a ``square'' system of

equations:

in order to obtain a ``square'' system of

equations:

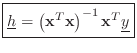

Thus,

If the input signal ![]() is an impulse

is an impulse ![]() (a 1 at time

zero and 0 at all other times), then

(a 1 at time

zero and 0 at all other times), then

![]() is simply the identity

matrix, which is its own inverse, and we obtain

is simply the identity

matrix, which is its own inverse, and we obtain

![]() . We expect

this by definition of the impulse response. More generally,

. We expect

this by definition of the impulse response. More generally,

![]() is invertible whenever the input signal is ``sufficiently

exciting'' at all frequencies. An LTI filter frequency response can

be identified only at frequencies that are excited by the input, and

the accuracy of the estimate at any given frequency can be improved by

increasing the input signal power at that frequency.

[46].

is invertible whenever the input signal is ``sufficiently

exciting'' at all frequencies. An LTI filter frequency response can

be identified only at frequencies that are excited by the input, and

the accuracy of the estimate at any given frequency can be improved by

increasing the input signal power at that frequency.

[46].

Effect of Measurement Noise

In practice, measurements are never perfect. Let

![]() denote the measured output signal, where

denote the measured output signal, where

![]() is a vector of

``measurement noise'' samples. Then we have

is a vector of

``measurement noise'' samples. Then we have

It is also straightforward to introduce a weighting function in

the least-squares estimate for

![]() by replacing

by replacing

![]() in the

derivations above by

in the

derivations above by

![]() , where

, where ![]() is any positive definite

matrix (often taken to be diagonal and positive). In the present

time-domain formulation, it is difficult to choose a

weighting function that corresponds well to audio perception.

Therefore, in audio applications, frequency-domain formulations are

generally more powerful for linear-time-invariant system

identification. A practical example is the frequency-domain

equation-error method described in §I.4.4 [78].

is any positive definite

matrix (often taken to be diagonal and positive). In the present

time-domain formulation, it is difficult to choose a

weighting function that corresponds well to audio perception.

Therefore, in audio applications, frequency-domain formulations are

generally more powerful for linear-time-invariant system

identification. A practical example is the frequency-domain

equation-error method described in §I.4.4 [78].

Matlab System Identification Example

The Octave output for the following small matlab example is listed in Fig.F.1:

delete('sid.log'); diary('sid.log'); % Log session

echo('on'); % Show commands as well as responses

N = 4; % Input signal length

%x = rand(N,1) % Random input signal - snapshot:

x = [0.056961, 0.081938, 0.063272, 0.672761]'

h = [1 2 3]'; % FIR filter

y = filter(h,1,x) % Filter output

xb = toeplitz(x,[x(1),zeros(1,N-1)]) % Input matrix

hhat = inv(xb' * xb) * xb' * y % Least squares estimate

% hhat = pinv(xb) * y % Numerically robust pseudoinverse

hhat2 = xb\y % Numerically superior (and faster) estimate

diary('off'); % Close log file

One fine point is the use of the syntax ``

+ echo('on'); % Show commands as well as responses

+ N = 4; % Input signal length

+ %x = rand(N,1) % Random input signal - snapshot:

+ x = [0.056961, 0.081938, 0.063272, 0.672761]'

x =

0.056961

0.081938

0.063272

0.672761

+ h = [1 2 3]'; % FIR filter

+ y = filter(h,1,x) % Filter output

y =

0.056961

0.195860

0.398031

1.045119

+ xb = toeplitz(x,[x(1),zeros(1,N-1)]) % Input matrix

xb =

0.05696 0.00000 0.00000 0.00000

0.08194 0.05696 0.00000 0.00000

0.06327 0.08194 0.05696 0.00000

0.67276 0.06327 0.08194 0.05696

+ hhat = inv(xb' * xb) * xb' * y % Least squares estimate

hhat =

1.0000

2.0000

3.0000

3.7060e-13

+ % hhat = pinv(xb) * y % Numerically robust pseudoinverse

+ hhat2 = xb\y % Numerically superior (and faster) estimate

hhat2 =

1.0000

2.0000

3.0000

3.6492e-16

|

Next Section:

Markov Parameters

Previous Section:

State Space Realization