Hi All,

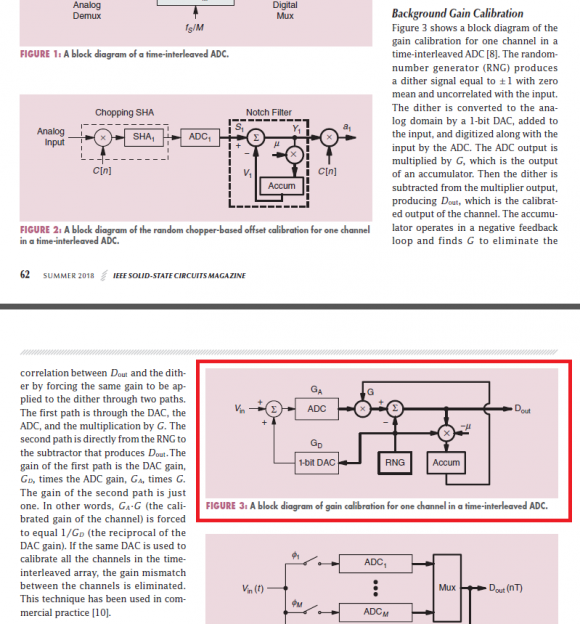

I'm a DSP newbie trying to get more familiar with convergence algorithms and DSP in general. I've come across an article on gain calibration for time-interleaved ADCs ("Calibration and Dynamic Matching in Data Converters"). In particular, I'd like to understand how to analyze the block diagram in the attached picture (Figure 3) mathematically. I can simulate it in Simulink and see that it does what it is supposed to do, but I am not sure how to prove analytically that G = 1/(GD*GA). I also don't fully understand why multiplying the dither signal by Dout and feeding the product into an accumulator produces G.
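To make the question concrete, here is a minimal Python sketch of how I read the loop. The signal model is my own assumption and is not taken from the article: a binary ±1 dither `d` is injected with gain GD, the sum passes through the analog gain GA, the digital side scales by G and subtracts the dither, so Dout = G*GA*(x + GD*d) - d. The accumulator is modeled as G[n+1] = G[n] - mu*d[n]*Dout[n]. The names `simulate_gain_loop`, `mu`, and `n_steps` are hypothetical. If x is uncorrelated with d and d*d = 1, the average of d*Dout is G*GA*GD - 1, so the accumulator should stop drifting only when G = 1/(GD*GA), which seems to match what I see in Simulink.

```python
import random


def simulate_gain_loop(ga=0.9, gd=1.1, mu=2e-4, n_steps=100_000, seed=0):
    """Simulate an assumed dither-correlation gain loop and return the final G.

    Assumed model (not taken from the article's figure):
        Dout[n] = G[n] * GA * (x[n] + GD * d[n]) - d[n]
        G[n+1]  = G[n] - mu * d[n] * Dout[n]
    """
    rng = random.Random(seed)
    g = 1.0  # initial guess for the digital correction gain
    for _ in range(n_steps):
        x = rng.uniform(-0.5, 0.5)        # input signal, uncorrelated with the dither
        d = rng.choice((-1.0, 1.0))       # binary pseudo-random dither, d*d == 1
        dout = g * ga * (x + gd * d) - d  # scaled ADC output minus the known dither
        # Correlate Dout with the dither. On average d*dout = g*ga*gd - 1,
        # so the accumulator drives g toward 1 / (ga * gd).
        g -= mu * d * dout
    return g


if __name__ == "__main__":
    ga, gd = 0.9, 1.1
    print("final G :", simulate_gain_loop(ga, gd))
    print("1/(GD*GA):", 1.0 / (ga * gd))
```

In this sketch the step size `mu` trades convergence speed against how much G jitters around its final value, because the term G*GA*x*d acts as zero-mean noise in the update.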

Also, please feel free to point me towards any books/materials. My end goal is to become comfortable and develop a strong intuition and understanding of systems like these. Any help/guidance is greatly appreciated!

Best Regards,

Kaung