Round-Off Error Variance

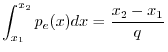

This appendix shows how to derive that the noise power of amplitude quantization error is $q^2/12$, where $q$ is the quantization step size. This is an example of a topic in statistical signal processing, which is beyond the scope of this book. (Some good textbooks in this area include [27,51,34,33,65,32].) However, since the main result is so useful in practice, it is derived below anyway, with needed definitions given along the way. The interested reader is encouraged to explore one or more of the above-cited references in statistical signal processing.
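As an empirical sanity check on the $q^2/12$ figure, the following Python sketch rounds a hypothetical test signal to a grid of step $q$ and measures the mean-square round-off error. It assumes the signal spans many quantization steps, so that the errors behave like the uniform model described below; the function name `rounding_noise_power` and the uniform test signal are illustrative choices, not part of the text.

```python
import random

def rounding_noise_power(q, num_samples=100_000, seed=0):
    """Round a hypothetical test signal to multiples of q and return the
    mean-square round-off error, i.e. the measured noise power."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(num_samples):
        x = rng.uniform(-100.0, 100.0)   # test amplitude spanning many steps (assumption)
        # round() is round-half-to-even, but exact ties have ~zero probability here
        e = q * round(x / q) - x         # round-off error e(n)
        total += e * e
    return total / num_samples

for q in (0.5, 0.1):
    print(q, rounding_noise_power(q), q * q / 12)
```

The measured values should land within a fraction of a percent of $q^2/12$; a signal that sits on only a few quantization levels would not satisfy the uniform-error assumption.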


Each round-off error in the quantization noise $e(n)$ is modeled as a uniform random variable between $-q/2$ and $q/2$. It therefore has the following probability density function (pdf) [51]:

$$p_e(x) = \begin{cases} \dfrac{1}{q}, & \left\vert x\right\vert \le \dfrac{q}{2} \\[5pt] 0, & \left\vert x\right\vert > \dfrac{q}{2} \end{cases}$$
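Because $p_e(x)$ is a density, probabilities are obtained by integrating it over an interval; for an interval $[x_1, x_2]$ inside $[-q/2, q/2]$ the result is $(x_2 - x_1)/q$. The sketch below encodes the pdf and integrates it numerically. The helper names `uniform_error_pdf` and `prob_in_interval`, and the use of the midpoint rule, are assumptions for illustration.

```python
def uniform_error_pdf(x, q):
    """p_e(x) for rounding with step q: 1/q for |x| <= q/2, else 0."""
    return 1.0 / q if abs(x) <= q / 2 else 0.0

def prob_in_interval(x1, x2, q, steps=10_000):
    """Probability that e(n) lies in [x1, x2]: integrate p_e by the midpoint rule."""
    h = (x2 - x1) / steps
    return h * sum(uniform_error_pdf(x1 + (k + 0.5) * h, q) for k in range(steps))

q = 1.0
print(prob_in_interval(-q, q, q))        # total probability, approximately 1.0
print(prob_in_interval(0.0, 0.25, q))    # inside the support: (x2 - x1)/q = 0.25
```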

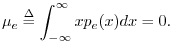

The mean of a random variable is defined as

The mean of a signal ![]() is the same thing as the

expected value of

is the same thing as the

expected value of ![]() , which we write as

, which we write as

![]() .

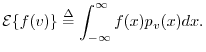
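The mean integral can be checked numerically. The sketch below evaluates $\int x\,p(x)\,dx$ with the midpoint rule for the rounding pdf and, as a hypothetical point of comparison, for truncation toward $-\infty$ (floor), whose error is assumed uniform on $(-q, 0]$. The helper name `mean_of_pdf` and the midpoint-rule integration are illustrative assumptions.

```python
def mean_of_pdf(pdf, lo, hi, steps=100_000):
    """Approximate mu = integral of x * pdf(x) dx over [lo, hi] with the midpoint rule.
    [lo, hi] must contain the support of pdf."""
    h = (hi - lo) / steps
    total = 0.0
    for k in range(steps):
        x = lo + (k + 0.5) * h      # midpoint of the kth cell
        total += x * pdf(x)
    return total * h

q = 1.0
# Rounding: error uniform on [-q/2, q/2] (the pdf p_e above).
p_round = lambda x: 1.0 / q if abs(x) <= q / 2 else 0.0
# Hypothetical comparison: truncation toward -infinity (floor), error uniform on (-q, 0].
p_floor = lambda x: 1.0 / q if -q < x <= 0 else 0.0

print(mean_of_pdf(p_round, -q, q))   # essentially 0
print(mean_of_pdf(p_floor, -q, q))   # about -q/2
```

The nonzero mean in the floor case shows why the zero-mean property depends on rounding.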

In general, the expected value of any function $f(v)$ of a random variable $v$ is given by

$$\mathcal{E}\{f(v)\} = \int_{-\infty}^{\infty} f(x)\, p_v(x)\, dx.$$

Since the quantization-noise signal ![]() is modeled as a series of

independent, identically distributed (iid) random variables, we can

estimate the mean by averaging the signal over time.

Such an estimate is called a sample mean.

is modeled as a series of

independent, identically distributed (iid) random variables, we can

estimate the mean by averaging the signal over time.

Such an estimate is called a sample mean.

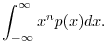
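To contrast the two ways of obtaining a mean described above (integrating against the pdf, and averaging the signal over time), the sketch below computes $\mathcal{E}\{f(e)\}$ numerically and compares it with sample means of simulated round-off errors. The test signal, the helper names `expected_value` and `sample_mean`, and the midpoint-rule integration are assumptions for illustration; the agreement relies on the iid model.

```python
import random

def expected_value(f, pdf, lo, hi, steps=100_000):
    """Approximate E{f(v)} = integral of f(x) * pdf(x) dx over [lo, hi] (midpoint rule)."""
    h = (hi - lo) / steps
    total = 0.0
    for k in range(steps):
        x = lo + (k + 0.5) * h
        total += f(x) * pdf(x)
    return total * h

def sample_mean(values):
    """Sample mean: the time average (1/N) * sum over n of e(n)."""
    return sum(values) / len(values)

q = 1.0
p_e = lambda x: 1.0 / q if abs(x) <= q / 2 else 0.0   # uniform round-off pdf

# Hypothetical test signal: its round-off errors e(n) stand in for iid draws.
rng = random.Random(1)
errors = []
for _ in range(50_000):
    x = rng.uniform(-1000.0, 1000.0)
    errors.append(q * round(x / q) - x)

# Ensemble averages (integrals against the pdf) versus time averages.
print(expected_value(lambda x: x, p_e, -q, q), sample_mean(errors))
print(expected_value(abs, p_e, -q, q), sample_mean([abs(e) for e in errors]))
```

With $f(x) = x$ both columns estimate the mean $0$; with $f(x) = |x|$ both estimate the mean magnitude $q/4$.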

Probability distributions are often characterized by their

moments.

The ![]() th moment of the pdf

th moment of the pdf ![]() is defined as

is defined as

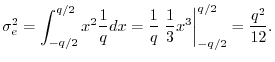
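For the uniform round-off pdf, the moments have a simple closed form: odd moments vanish by symmetry, and for even $n$ the $n$th moment is $(q/2)^n/(n+1)$. The sketch below checks that formula numerically; the helper names `moment` and `uniform_moment_exact` and the midpoint-rule integration are assumptions for illustration. Note that the second moment comes out to $q^2/12$.

```python
def moment(n, pdf, lo, hi, steps=100_000):
    """Approximate the nth moment, integral of x**n * pdf(x) dx, by the midpoint rule."""
    h = (hi - lo) / steps
    total = 0.0
    for k in range(steps):
        x = lo + (k + 0.5) * h
        total += x ** n * pdf(x)
    return total * h

def uniform_moment_exact(n, q):
    """Closed form for the uniform pdf on [-q/2, q/2]: odd moments vanish,
    even moments equal (q/2)**n / (n + 1)."""
    return 0.0 if n % 2 else (q / 2) ** n / (n + 1)

q = 1.0
p_e = lambda x: 1.0 / q if abs(x) <= q / 2 else 0.0
for n in range(5):
    print(n, moment(n, p_e, -q / 2, q / 2), uniform_moment_exact(n, q))
```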

The variance of a random variable $e(n)$ is defined as the second central moment of the pdf:

$$\sigma_e^2 \triangleq \mathcal{E}\{[e(n)-\mu_e]^2\} = \int_{-\infty}^{\infty} (x-\mu_e)^2\, p_e(x)\, dx$$
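Since $\mu_e = 0$ under rounding and $p_e(x)$ vanishes outside $[-q/2, q/2]$, the variance integral can be evaluated directly. A worked version, included as a check on the $q^2/12$ result stated at the outset:

$$\sigma_e^2 = \int_{-q/2}^{q/2} x^2\,\frac{1}{q}\,dx = \frac{1}{q}\left[\frac{x^3}{3}\right]_{-q/2}^{q/2} = \frac{1}{3q}\left(\frac{q^3}{8} + \frac{q^3}{8}\right) = \frac{q^2}{12}$$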
