Gaussian Distribution

The Gaussian distribution has maximum entropy relative to all probability distributions covering the entire real line $x\in(-\infty,\infty)$ but having a finite mean $\mu$ and finite variance $\sigma^2$.
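As a quick sanity check of the maximum-entropy claim, the sketch below compares closed-form differential entropies (in nats) of three densities supported on the whole real line, all scaled to the same variance. The Laplace and logistic distributions are chosen here only as illustrative competitors, and the function names are hypothetical; none of this appears in the original text.

```python
import math

def gaussian_entropy(sigma):
    """Differential entropy of a Gaussian with standard deviation sigma."""
    return 0.5 * math.log(2.0 * math.pi * math.e * sigma**2)

def laplace_entropy(sigma):
    """Differential entropy of a Laplace density with the same variance."""
    b = sigma / math.sqrt(2.0)            # Laplace variance is 2*b^2
    return 1.0 + math.log(2.0 * b)

def logistic_entropy(sigma):
    """Differential entropy of a logistic density with the same variance."""
    s = sigma * math.sqrt(3.0) / math.pi  # logistic variance is s^2*pi^2/3
    return math.log(s) + 2.0

sigma = 1.0  # assumed common standard deviation for the comparison
entropies = {
    "gaussian": gaussian_entropy(sigma),
    "logistic": logistic_entropy(sigma),
    "laplace": laplace_entropy(sigma),
}
for name, h in entropies.items():
    print(f"{name:9s} {h:.4f} nats")
```

For any common variance, the Gaussian value should come out largest. Changing `sigma` shifts all three entropies by the same amount, so the ordering does not change.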

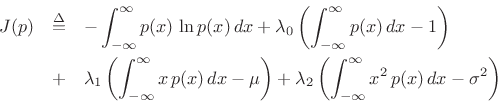

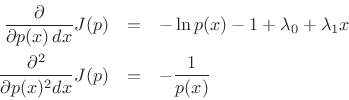

Proceeding as before, we obtain the objective function

and partial derivatives

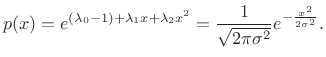

leading to

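$$
p(x) = \frac{1}{\sqrt{2\pi\sigma^2}}\, e^{-\frac{(x-\mu)^2}{2\sigma^2}} \qquad \text{(D.41)}
$$

where $\mu$ is the mean and $\sigma^2$ is the variance.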
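As a sketch of the last step, suppose that setting the functional derivative to zero gives $\ln p(x) = (\lambda_0 - 1) + \lambda_1 x + \lambda_2 x^2$. The multiplier names $\lambda_0, \lambda_1, \lambda_2$ and this sign convention are assumptions, since the objective function is not reproduced in the text here. Completing the square in the exponent and imposing the normalization, mean, and variance constraints then identifies the multipliers with the Gaussian parameters:

$$
\begin{aligned}
p(x) &= e^{(\lambda_0 - 1) + \lambda_1 x + \lambda_2 x^2}, \qquad \lambda_2 < 0, \\
\lambda_2 &= -\frac{1}{2\sigma^2}, \qquad
\lambda_1 = \frac{\mu}{\sigma^2}, \qquad
\lambda_0 - 1 = -\frac{\mu^2}{2\sigma^2} - \frac{1}{2}\ln\!\left(2\pi\sigma^2\right).
\end{aligned}
$$

The condition $\lambda_2 < 0$ is what makes the density integrable over the entire real line.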

For more on entropy and maximum-entropy distributions, see [48].
