Gaussian Mean

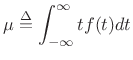

The mean of a distribution $f(t)$ is defined as its first-order moment:

$$\mu \triangleq \int_{-\infty}^{\infty} t\, f(t)\, dt \qquad \text{(D.42)}$$

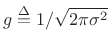

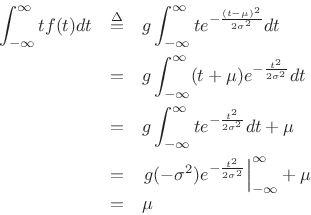

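To show that the mean of the Gaussian distribution is $\mu$, we may write, letting $g \triangleq 1/\sqrt{2\pi\sigma^2}$ and denoting the Gaussian density by $f(t)$,

$$\begin{aligned}
\int_{-\infty}^{\infty} t\, f(t)\, dt
&\triangleq g \int_{-\infty}^{\infty} t\, e^{-\frac{(t-\mu)^2}{2\sigma^2}}\, dt \\
&= g \int_{-\infty}^{\infty} (t+\mu)\, e^{-\frac{t^2}{2\sigma^2}}\, dt \\
&= g \int_{-\infty}^{\infty} t\, e^{-\frac{t^2}{2\sigma^2}}\, dt + \mu\, g \int_{-\infty}^{\infty} e^{-\frac{t^2}{2\sigma^2}}\, dt \\
&= 0 + \mu \cdot 1 = \mu,
\end{aligned}$$

since the first integrand is odd, so its integral vanishes, and the zero-mean Gaussian density $g\, e^{-t^2/(2\sigma^2)}$ integrates to one. The second line follows from the change of variable $t \to t + \mu$.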
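As a sanity check on the derivation, the first moment can also be approximated numerically. The following is a minimal sketch using only the Python standard library. The function names `gaussian_pdf` and `first_moment`, the parameter values, and the truncation of the infinite range to twelve standard deviations either side of the mean are all illustrative assumptions, not part of the original text.

```python
import math

def gaussian_pdf(t, mu, sigma):
    """Gaussian density with mean parameter mu and standard deviation sigma."""
    g = 1.0 / math.sqrt(2.0 * math.pi * sigma ** 2)
    return g * math.exp(-((t - mu) ** 2) / (2.0 * sigma ** 2))

def first_moment(pdf, lo, hi, n=20_000):
    """Approximate the integral of t * pdf(t) over [lo, hi] by the midpoint rule."""
    dt = (hi - lo) / n
    total = 0.0
    for k in range(n):
        t = lo + (k + 0.5) * dt  # midpoint of the k-th subinterval
        total += t * pdf(t)
    return total * dt

mu, sigma = 1.5, 0.7  # arbitrary illustrative values

# Truncate the infinite range to mu +/- 12 sigma, where the tails are negligible.
estimate = first_moment(lambda t: gaussian_pdf(t, mu, sigma),
                        mu - 12 * sigma, mu + 12 * sigma)
print(estimate)
```

The printed estimate should agree with the chosen `mu` to within numerical error, consistent with the result that the first moment of the Gaussian density equals its mean parameter.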
