Minimum Shift Keying (MSK) - A Tutorial

Minimum Shift Keying (MSK) is one of the most spectrally efficient modulation schemes available. Thanks to its constant envelope, it tolerates non-linear distortion well, which is why a Gaussian-filtered variant of it (GMSK) was chosen as the modulation technique for the GSM cell phone standard.

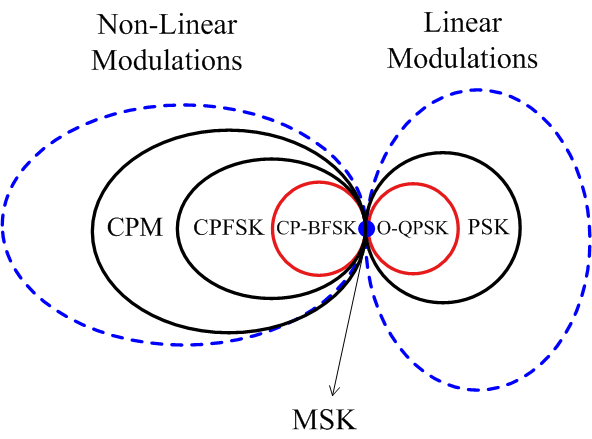

MSK is a special case of Continuous-Phase Frequency Shift Keying (CPFSK), which in turn is a special case of a general class of modulation schemes known as Continuous-Phase Modulation (CPM). CPM (and hence CPFSK) is a non-linear modulation, so by extension MSK is a non-linear modulation as well. Nevertheless, MSK can also be cast as a linear modulation scheme, namely Offset Quadrature Phase Shift Keying (OQPSK), which is a special case of Phase Shift Keying (PSK). MSK thus sits on the border between the two families, as illustrated in Figure 1.

Figure 1: MSK as a special case of both non-linear and linear modulation schemes

At this point, you might be wondering: how can a modulation be both non-linear and linear? As we see later in this tutorial, MSK is originally a non-linear modulation, but a certain mapping of its actual digital symbols to so-called pseudo-symbols turns it into an OQPSK representation.

Modulation is usually a simple topic to understand, but owing to this dual nature MSK can sometimes be an intimidating concept. Here, our purpose is to present it in an uncomplicated manner by building it up from the fundamentals.

The starting point is one of the simplest digital modulations possible: FSK.

Binary Frequency Shift Keying (BFSK)

In Frequency Shift Keying (FSK), digital information is transmitted by changing the frequency of a carrier signal. Naturally, Binary FSK (BFSK) is the simplest form of FSK where the two bits 0 and 1 correspond to two distinct carrier frequencies $F_0$ and $F_1$ to be sent over the air. The bits can be translated into symbols through the relations

$$0 \quad \rightarrow \quad -1$$

$$1 \quad \rightarrow \quad +1$$

This enables us to write the frequencies $F_i$ with $i \in \{0,1\}$ as

$$F_i = F_c + (-1)^{i+1} \cdot \Delta_F = F_c \pm \Delta_F$$

where $F_c$ is the nominal carrier frequency and $\Delta_F$ is the peak frequency deviation from this carrier frequency. Consequently,

\begin{equation}s(t) = A \cos 2\pi F_i t = A \cos\Big[2\pi \big\{F_c \pm \Delta_F\big\} t\Big]\quad ---- \quad \text{Eq (1)}\end{equation}

where $$0\le t \le T_b$$

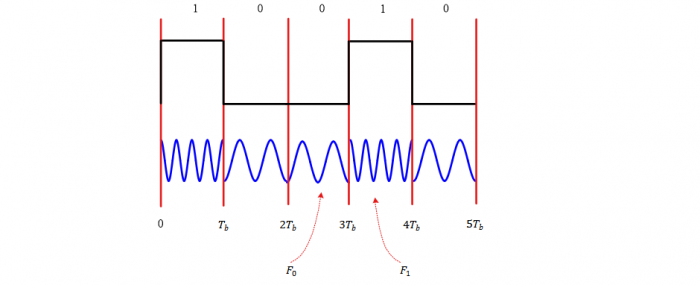

Figure 2 below displays a BFSK waveform for a random stream of data at a rate of $R_b = 1/T_b$. Note that we are not distinguishing between a bit period and a symbol period because both are the same for a binary modulation technique.

Figure 2: A Binary Frequency Shift Keying (BFSK) waveform

As is evident from Figure 2 above, the phase transitions at the boundaries of bit transitions are -- in general -- discontinuous.
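To make the discontinuity concrete, here is a minimal NumPy sketch of Eq (1). It assumes that the time origin restarts in every bit interval, as the range $0 \le t \le T_b$ suggests. The function name `bfsk_waveform`, the sampling rate `fs` and all numeric values are illustrative choices, not part of the derivation.

```python
import numpy as np

def bfsk_waveform(bits, Fc, delta_F, Tb, fs, A=1.0):
    """Return (t, s) for BFSK per Eq (1), restarting local time in every bit."""
    n_samp = int(round(Tb * fs))            # samples per bit
    t_bit = np.arange(n_samp) / fs          # local time 0 <= t < Tb
    symbols = 2 * np.asarray(bits) - 1      # 0 -> -1, 1 -> +1
    segments = [A * np.cos(2 * np.pi * (Fc + a * delta_F) * t_bit)
                for a in symbols]
    s = np.concatenate(segments)
    t = np.arange(s.size) / fs
    return t, s

bits = [1, 0, 0, 1, 1]
t, s = bfsk_waveform(bits, Fc=2.3, delta_F=0.6, Tb=1.0, fs=1000)

# Largest sample-to-sample step inside the first bit versus the step at t = Tb
step_inside = np.max(np.abs(np.diff(s[:1000])))
step_boundary = abs(s[1000] - s[999])
print(step_inside, step_boundary)
```

With these values the tone does not complete an integer number of cycles per bit, so the step at the bit boundary is much larger than any step inside a bit.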

Minimum Frequency Spacing

Although any two distinct frequencies $F_0$ and $F_1$ can be used for communication, receiver design is greatly simplified if the two signals are orthogonal to each other, i.e.,

$$\int \limits _0 ^{T_b} s_1(t) s_0(t) ~dt = 0$$

A question that arises at this stage is the following: how close can the two frequencies $F_0$ and $F_1$ be? In other words, what is the smallest possible value of $\Delta_F$? The reason for asking this question is spectral efficiency. The closer the two frequencies, the more channels are available for other users in the same spectrum.

For the BFSK case, the orthogonality condition becomes

$$\int \limits _0 ^{T_b} \cos (2 \pi F_1 t) \cos (2 \pi F_0 t) ~ dt = 0$$

or, using the identity $\cos \alpha \cos \beta = \frac{1}{2}\big[\cos (\alpha+\beta) + \cos (\alpha-\beta)\big]$,

\begin{align*} \int \limits _0 ^{T_b} \cos 2 \pi (F_1 + F_0 )t ~ dt + \int \limits _0 ^{T_b} \cos 2 \pi (F_1 - F_0 )t ~ dt &= 0 \\ \frac{\sin 2\pi (F_1+F_0)t}{2\pi (F_1+F_0)}\bigg |_{0}^{T_b} + \frac{\sin 2\pi (F_1-F_0)t}{2\pi (F_1-F_0)}\bigg|_{0}^{T_b}&= 0 \\ \frac{\sin 2\pi (F_1+F_0)T_b}{2\pi (F_1+F_0)} + \frac{\sin 2\pi (F_1-F_0)T_b}{2\pi (F_1-F_0)}&= 0 \end{align*}

Since $F_1+F_0 = 2F_c$ is very large in practice ($2F_c \gg R_b$) while $-1 \le \sin x \le 1$, the first term is negligible and we can write

\begin{equation*} \sin 2\pi (F_1-F_0) T_b = 0 \end{equation*}

which is true for (remember $\sin k\pi = 0$ for integer $k$)

$$2\pi (F_1-F_0) T_b = k\pi$$

From here, the orthogonality condition can be concluded as

$$F_1-F_0 = \frac{k}{2T_b}$$

The minimum frequency separation for orthogonal signaling follows for $k=1$ as

$$F_1-F_0 = \frac{1}{2T_b} = \frac{R_b}{2}$$

Thus, the peak frequency deviation $\Delta_F$ can be computed as

$$\Delta_F = \frac{F_1-F_0}{2} = \frac{1}{4T_b} = \frac{R_b}{4}$$
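The orthogonality condition can be checked numerically. The sketch below approximates the correlation integral with a midpoint rule for tone separations $k/(2T_b)$. The helper name `tone_correlation`, the carrier value `Fc = 100` and the number of integration points are assumptions made for illustration.

```python
import numpy as np

def tone_correlation(F0, F1, Tb, n=200_000):
    """Midpoint-rule estimate of the integral of cos(2*pi*F1*t)*cos(2*pi*F0*t) over [0, Tb]."""
    dt = Tb / n
    t = (np.arange(n) + 0.5) * dt
    return np.sum(np.cos(2 * np.pi * F1 * t) * np.cos(2 * np.pi * F0 * t)) * dt

Tb = 1.0
Fc = 100.0                      # assumed carrier: many cycles per bit
results = {}
for k in (0.5, 1, 2, 3):
    sep = k / (2 * Tb)          # F1 - F0
    results[k] = tone_correlation(Fc - sep / 2, Fc + sep / 2, Tb)
    print(f"k = {k}: correlation = {results[k]:+.6f}")
```

For integer $k$ the printed correlation should be essentially zero, while the non-integer case $k = 0.5$ leaves a clearly non-zero residue, which is why $k = 1$ gives the smallest usable spacing.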

MSK as Continuous-Phase FSK

From the above information, a BFSK signal with minimum tone spacing can be constructed by replacing $\Delta_F$ by $R_b/4$ in Eq (1) as

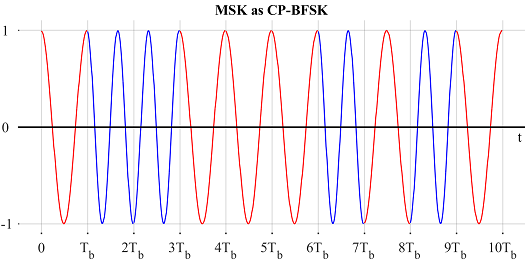

\begin{align*}s(t) &= A \cos \Big[2\pi \big\{F_c \pm \frac{R_b}{4}\big\} t\Big], \\ &= A \cos \Big[2\pi F_c t \pm 2\pi\frac{R_b}{4} t\Big], \quad 0\le t \le T_b \end{align*}

This is a BFSK signal with minimum tone spacing, defined over a single bit interval $0 \le t \le T_b$. Two more steps are needed to construct an actual MSK waveform.

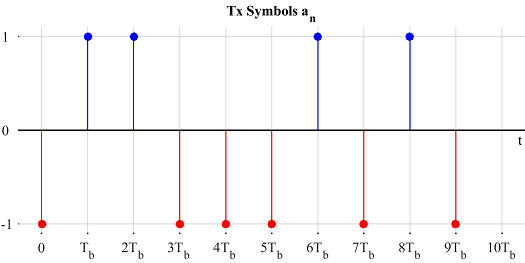

In a real communication system, the signal is constructed by transmitting a sequence of bits $b_n$ in succession, where $n$ is the bit index. As we have seen earlier, bits $b_n \in \{0,1\}$ are converted to symbols $a_n$.

$$a_n \in \{-1,+1\}$$

Then, in the interval $nT_b \le t \le (n+1)T_b$, the above signal can be written as

\begin{align} s(t) &= A \cos \Big[2\pi F_c t + 2\pi\frac{a_n R_b}{4} (t-nT_b)\Big], \quad nT_b \le t \le (n+1)T_b \end{align}

where $n$ is the bit index within a long bit stream and the second term carries the underlying baseband message.

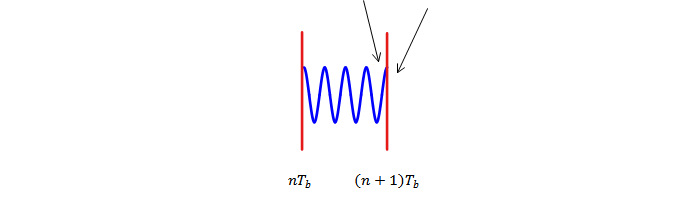

Observe in the above equation that the phase continuity is not necessarily maintained from one symbol to the next. To ensure phase continuity, we must add a phase component $\theta_n$ for each symbol as

\begin{align*}s(t) &= A \cos \Big[2\pi F_c t + 2\pi\frac{a_n R_b}{4} (t-nT_b) + \theta_n\Big] \\ & \qquad \qquad nT_b \le t \le (n+1)T_b \quad ---- \quad \text{Eq (2)}\end{align*}

For this purpose, the phase at both sides of $t=(n+1)T_b$ must be equal, as illustrated in Figure 3.

Figure 3: Phase on both sides of $t=(n+1)T_b$ must be equal to maintain continuity

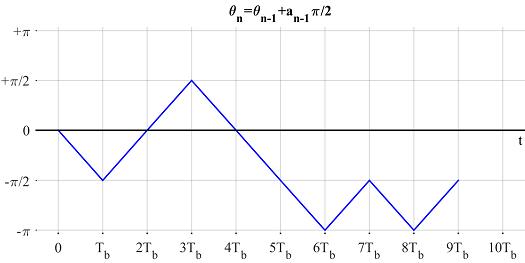

Thus, at the instant $t=(n+1)T_b$, the following equation must be satisfied.

\begin{align*} 2\pi \frac{a_n R_b}{4} (t-nT_b) + \theta_n \bigg |_{t=(n+1)T_b} &= 2\pi \frac{a_{n+1} R_b}{4} (t-(n+1)T_b) + \theta_{n+1} \bigg |_{t=(n+1)T_b} \end{align*}

Since $R_b T_b = 1$, the left side reduces to $a_n \frac{\pi}{2} + \theta_n$ and the right side to $\theta_{n+1}$, which can be written as

$$\theta_{n+1} = \theta_{n} + a_n \frac{\pi}{2}$$

Another way to write the above recursive relation is

\begin{equation*}\boxed{\theta_{n} = \theta_{n-1} + a_{n-1} \frac{\pi}{2}}\quad ---- \quad \text{Eq (3)}\end{equation*}

Assume that $\theta_0 = 0$. Then,

$$\theta_1 = a_0 \frac{\pi}{2}$$

$$\theta_2 = \theta_1 + a_1 \frac{\pi}{2} = \frac{\pi}{2} (a_0+a_1)$$

In general,

$$\theta_n = \frac{\pi}{2} \sum \limits_{i=0}^{n-1} a_i \quad ---- \quad \text{Eq (4)}$$
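As a quick sanity check, the closed form in Eq (4) can be compared against the recursion in Eq (3) for a short symbol sequence. This is only a sketch: the function name `phase_states` and the example symbols are made up for illustration, and $\theta_0 = 0$ is assumed as in the text.

```python
import numpy as np

def phase_states(a, theta0=0.0):
    """theta_n from Eq (4): (pi/2) times the running sum of all previous symbols."""
    a = np.asarray(a)
    return theta0 + (np.pi / 2) * np.concatenate(([0], np.cumsum(a[:-1])))

a = np.array([+1, +1, -1, +1, -1, -1])
theta = phase_states(a)

# Cross-check against the recursion in Eq (3)
theta_rec = [0.0]
for n in range(1, len(a)):
    theta_rec.append(theta_rec[-1] + a[n - 1] * np.pi / 2)

print(theta / (np.pi / 2))   # theta_n in units of pi/2
```

Each $\theta_n$ depends only on the symbols before index $n$, which is why the running sum stops at $a_{n-1}$.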

When the phase follows this rule during each bit/symbol interval, the phase continuity is ensured and the resulting waveform is shown for an example sequence in Figure 4.
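The following sketch samples Eq (2) with $\theta_n$ taken from Eq (4) and confirms that no sample-to-sample step exceeds what the highest tone alone can produce, i.e., that there are no jumps at bit boundaries. The function name `msk_cpfsk`, the sampling rate and the low carrier frequency are illustrative assumptions.

```python
import numpy as np

def msk_cpfsk(a, Fc, Tb, fs, A=1.0):
    """Sample Eq (2) with theta_n from Eq (4); returns (t, s)."""
    a = np.asarray(a)
    Rb = 1.0 / Tb
    theta = (np.pi / 2) * np.concatenate(([0], np.cumsum(a[:-1])))
    spb = int(round(Tb * fs))          # samples per bit
    k = np.arange(len(a) * spb)        # sample index
    t = k / fs
    n = k // spb                       # bit index of every sample
    phase = (2 * np.pi * Fc * t
             + 2 * np.pi * a[n] * Rb / 4 * (t - n * Tb)
             + theta[n])
    return t, A * np.cos(phase)

a = [+1, -1, -1, +1, +1]
Fc, Tb, fs = 2.0, 1.0, 1000
t, s = msk_cpfsk(a, Fc, Tb, fs)

max_step = np.max(np.abs(np.diff(s)))
bound = 2 * np.pi * (Fc + 1 / (4 * Tb)) / fs   # largest phase increment per sample
print(max_step, bound)
```

Because $|\cos x - \cos y| \le |x - y|$, a continuous phase guarantees `max_step <= bound`; a phase jump at a bit boundary would violate it.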

Figure 4: MSK as Continuous-Phase Binary FSK

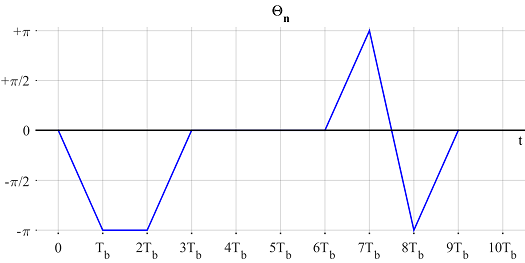

Figure 5: $\theta_n$ evolving with time

MSK as Offset QPSK

First, write Eq (2) assuming $A=1$ as

\begin{align*}s(t) &= \cos \Big[2\pi F_c t + 2\pi \frac{a_n R_b}{4} (t-nT_b) + \theta_n\Big] \\ &= \cos \Big[2\pi F_c t + 2\pi \frac{a_n R_b}{4} t + \underbrace{\theta_n - na_n \frac{\pi}{2}}_{\Theta_n}\Big]\quad ---- \quad \text{Eq (5)} \end{align*}

Using Eq (3), we can further manipulate $\Theta_n$ as

\begin{align*} \Theta_n &= \theta_n - na_n \frac{\pi}{2} \\ &= \theta_{n-1} + a_{n-1}\frac{\pi}{2} - na_n \frac{\pi}{2} \\ &= \theta_{n-1} + a_{n-1}\Big(+1-n+n\Big)\frac{\pi}{2} - na_n \frac{\pi}{2} \\ &= \theta_{n-1} - (n-1)a_{n-1}\frac{\pi}{2} + n \frac{\pi}{2} \Big(a_{n-1} - a_n\Big)\\ &= \Theta_{n-1} + n \frac{\pi}{2} \Big(a_{n-1} - a_n\Big)\quad ---- \quad \text{Eq (6)} \end{align*}

Notice that $\Theta_{n} = \Theta_{n-1}$ (modulo $2\pi$) when

- $a_n = a_{n-1}$ because the second term in Eq (6) becomes zero.

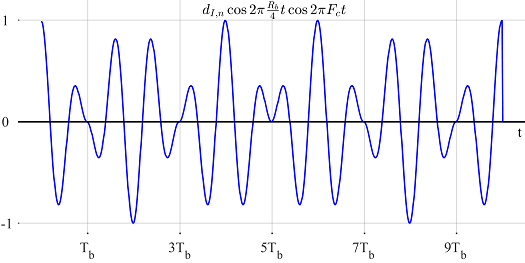

- $n$ is even because the second term in Eq (6) is a multiple of $2\pi$ (remember that $a_{n-1}-a_n$ is $\pm 2$ when not zero).

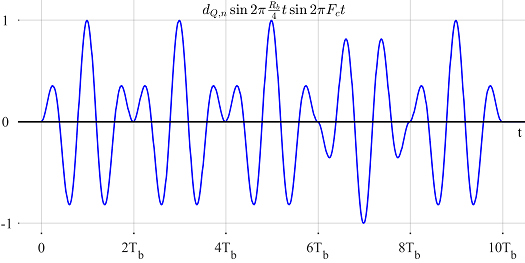

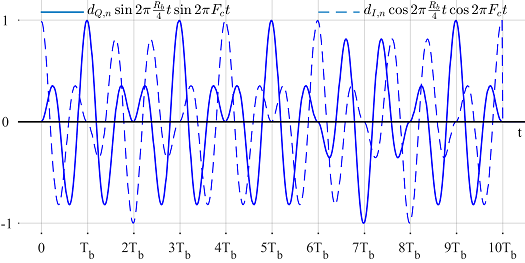

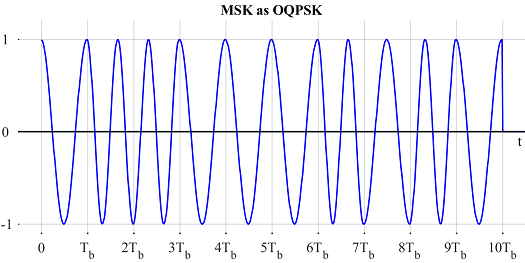

Combining these observations and ignoring multiples of $2\pi$,

$$\Theta_n = \begin{cases} \Theta_{n-1}\pm \pi & a_n \neq a_{n-1}, n~\text{odd} \\ \Theta_{n-1} & \text{otherwise} \end{cases}\quad ---- \quad \text{Eq (7)}$$

Next, we use the following identities

\begin{align*} \cos (\alpha\pm \beta) &= \cos \alpha\cos \beta \mp \sin \alpha \sin \beta \\ \sin (\alpha\pm \beta) &= \sin \alpha\cos \beta \pm \cos \alpha \sin \beta \\ \cos (-\alpha) &= \cos \alpha \\ \sin (-\alpha) &= - \sin \alpha \end{align*}

to open Eq (5).

\begin{align*} s(t) &= \cos \Big[2\pi F_c t + 2\pi \frac{a_n R_b}{4} t + \Theta_n \Big] \\ &= \cos 2\pi F_c t \cdot \underbrace{\cos \Big(2\pi \frac{a_nR_b}{4}t + \Theta_n\Big)}_{\text{Term 1}} - \\ &\qquad \qquad \qquad \sin 2\pi F_c t\cdot \underbrace{\sin \Big(2\pi \frac{a_nR_b}{4}t + \Theta_n\Big)}_{\text{Term 2}} \end{align*}

Now we process both terms one by one.

\begin{align*} \text{Term 1} &= \cos \Big(2\pi \frac{a_nR_b}{4}t + \Theta_n \Big) \\ &= \cos \Big(2\pi \frac{a_nR_b}{4}t\Big) \cos \Theta_n - \sin \Big(2\pi \frac{a_nR_b}{4}t\Big) \sin \Theta_n \\ &= \cos \Big( 2\pi \frac{a_nR_b}{4}t\Big) \cos \Theta_n = d_{I,n} \cos 2\pi \frac{R_b}{4}t \end{align*}

because $\Theta_0 = 0$ and Eq (7) together imply that $\Theta_n$ is always a multiple of $\pi$, i.e., $\sin \Theta_n = 0$. Furthermore, $a_n \in \{-1,+1\}$ and $\cos (\pm \alpha) = \cos \alpha$. We have also defined

$$ d_{I,n} = \cos \Theta_n$$

Similarly, Term 2 can be expanded as

\begin{align*} \text{Term 2} &= \sin \Big(2\pi \frac{a_nR_b}{4}t + \Theta_n \Big) \\ &= \sin \Big(2\pi \frac{a_nR_b}{4}t \Big) \cos \Theta_n + \cos\Big(2\pi \frac{a_nR_b}{4}t\Big) \sin \Theta_n \\ &= \cos \Theta_n \cdot a_n \sin 2\pi \frac{R_b}{4}t = d_{Q,n} \sin 2\pi \frac{R_b}{4}t \end{align*}

since $\sin \Theta_n = 0$ as before and $\sin (-\alpha) = - \sin (\alpha)$. Here, we have defined $d_{Q,n}$ as

$$d_{Q,n} = a_n \cdot \cos \Theta_n = a_n \cdot d_{I,n}$$

Finally, plugging both Term 1 and Term 2 into $s(t)$ for the $n$-th bit period,

$$s(t) = d_{I,n} \cos 2\pi \frac{R_b}{4}t \cdot \cos 2\pi F_c t - d_{Q,n} \sin 2\pi \frac{R_b}{4}t \cdot \sin 2\pi F_c t$$

Using the identity $\cos \alpha = \sin (\alpha + \pi/2)$, the above equation can be revised as

\begin{align*} s(t) &= d_{I,n} \sin \Big(2\pi \frac{R_b}{4}t + \frac{\pi}{2} \Big) \cdot \cos 2\pi F_c t - d_{Q,n} \sin 2\pi \frac{R_b}{4}t \cdot \sin 2\pi F_c t \\ &= d_{I,n} \sin \Big(2\pi \frac{R_b}{4}(t+T_b)\Big) \cdot \cos 2\pi F_c t - \\&\qquad \qquad \qquad d_{Q,n} \sin 2\pi \frac{R_b}{4}t \cdot \sin 2\pi F_c t\quad ---- \quad \text{Eq (8)}\\ &= d_{I,n}\, p\Big(t+\frac{1}{2}\cdot 2T_b\Big) \cdot \cos 2\pi F_c t - d_{Q,n}\, p(t) \cdot \sin 2\pi F_c t \end{align*}

where $p(t)$ is a sinusoid of period $4T_b$, so that each half cycle acts as a half-sinusoidal pulse shape of duration $2T_b$.

$$p(t) = \sin \Big( 2\pi\frac{R_b}{4}t \Big)$$

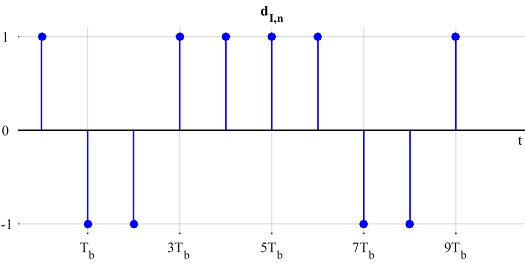

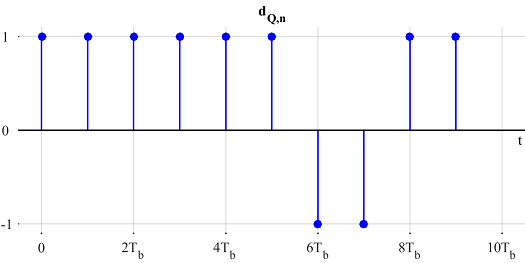

The above expression resembles an OQPSK waveform for bit $n$ if the bit rate for $d_{I,n}$ and $d_{Q,n}$ is $R_b/2$ or bit period is $2T_b$, since the time offset in the $\sin$ term must be half the bit period for OQPSK. So we have to check if $d_{I,n}$ and $d_{Q,n}$ change values every other symbol.

From the definition, $d_{I,n} = \cos \Theta_n$, and Eq (7) tells us that $\Theta_n$ can only change values for odd $n$. Hence, $d_{I,n}$ is indeed an $R_b/2$ rate stream.

On the other hand, $d_{Q,n}=a_n \cdot d_{I,n}$. Again, Eq (7) says that $d_{I,n}$ can only change at odd $n$ and only when $a_n$ changes, but in that case both factors flip sign and the product $a_n \cdot d_{I,n}$ stays the same. Therefore, $d_{Q,n}$ can only change for even $n$, when $a_n$ changes values while $d_{I,n}$ does not. Consequently, $d_{Q,n}$ is also an $R_b/2$ rate stream.

Since $d_{I,n}$ changes values for odd $n$ while $d_{Q,n}$ does the same for even $n$, $d_{Q,n}$ is offset with respect to $d_{I,n}$ by $T_b$ seconds, the same amount as $\cos 2\pi \frac{R_b}{4}t$ in Eq (8). Summing up everything so far, MSK can indeed be represented as an OQPSK waveform. The data rate is the same as in CP-BFSK format since two bits are being transmitted in two bit periods here as well.
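The equivalence can also be verified numerically. The sketch below builds $\Theta_n$, $d_{I,n}$ and $d_{Q,n}$ from an example symbol sequence, then compares the CP-BFSK form of Eq (2) with the quadrature form preceding Eq (8). The symbol sequence, carrier frequency and sampling rate are arbitrary illustrative choices.

```python
import numpy as np

a = np.array([+1, -1, -1, +1, +1, +1, -1, +1])      # example symbols (assumed)
n_idx = np.arange(len(a))
theta = (np.pi / 2) * np.concatenate(([0], np.cumsum(a[:-1])))   # Eq (4)
Theta = theta - n_idx * a * np.pi / 2                            # Theta_n as in Eq (5)
d_I = np.rint(np.cos(Theta)).astype(int)                         # pseudo-symbols, +/-1
d_Q = a * d_I

Fc, Tb, fs = 4.0, 1.0, 2000
Rb = 1 / Tb
spb = int(round(Tb * fs))                  # samples per bit
k = np.arange(len(a) * spb)
t, n = k / fs, k // spb                    # time and bit index of every sample

# CP-BFSK form, Eq (2) with A = 1
s_cpfsk = np.cos(2 * np.pi * Fc * t
                 + 2 * np.pi * a[n] * Rb / 4 * (t - n * Tb) + theta[n])
# Quadrature (OQPSK-like) form
s_oqpsk = (d_I[n] * np.cos(2 * np.pi * Rb / 4 * t) * np.cos(2 * np.pi * Fc * t)
           - d_Q[n] * np.sin(2 * np.pi * Rb / 4 * t) * np.sin(2 * np.pi * Fc * t))

i_changes = np.flatnonzero(np.diff(d_I)) + 1   # indices n where d_I,n != d_I,n-1
q_changes = np.flatnonzero(np.diff(d_Q)) + 1
print(d_I, d_Q, i_changes, q_changes)
```

For this sequence, `i_changes` contains only odd indices and `q_changes` only even ones, matching the argument above, and the two waveforms agree sample by sample.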

This representation is illustrated in Figure 6.

Figure 6: MSK as Offset QPSK

Compare Figure 6 with Figure 4. I did not choose separate blue and red colors in this figure so as not to confuse $d_n$s with $a_n$s (that can raise a misunderstanding that $d_{I,n}$ is odd $a_n$s and $d_{Q,n}$ is even $a_n$, which is not correct).

Observe from Figure 6 that $d_{I,n}$ changes values every $2T_b$ at odd multiples of $T_b$, while $d_{Q,n}$ changes values every $2T_b$ at even multiples of $T_b$. Due to this offset behavior, at every multiple of $T_b$, either the $I$ or the $Q$ waveform is zero while the other reaches its maximum magnitude. This is how the phase remains continuous during symbol transitions.

Figure 7 draws $\Theta_n$ as a supplement to above findings.

Figure 7: $\Theta_n$ evolving with time

On a final note, observe from some equations (e.g., (2) and (5)) that the continuous phase has two parts, one of which arises due to the delay of the $n$-th symbol. This information can be used to refine a phase estimate.

It is now also clear why MSK can act as both a linear and a non-linear modulation. In terms of the actual symbols $a_n$, MSK is a non-linear modulation scheme (see Eq (2)). The pseudo-symbols $d_n$ are themselves non-linear functions of the information bits. So it is only from the viewpoint of $d_n$ that MSK can be seen as a linear modulation scheme.

A Generalization: From MSK to Continuous-Phase FSK (CP-FSK)

After understanding MSK, it can be expanded into a general modulation scheme known as Continuous-Phase Frequency Shift Keying (CP-FSK). As we see in the next section, CP-FSK is a special case of Continuous-Phase Modulation (CPM), which is a class of non-linear digital modulation schemes in which the phase of the signal is constrained to be continuous from one symbol to the next. As with MSK, the most attractive feature of such a signal is that it has a constant envelope, because the amplitude is independent of the modulating information. Consequently, a CPM signal can be amplified without distortion by a non-linear amplifier operating near the saturation point, which allows lower cost and higher efficiency than a linear amplifier.

Usually CP-FSK (and hence CPM) is not a straightforward concept to master. However, starting from MSK, its basics can easily be understood by building on the same expressions. For this purpose,

- Remember that $\Delta_F = R_b/4$.

- Modulation symbols can carry more than 1 information bit. For example, 00, 01, 11 and 10 can be sent through four symbols $a_n \in \{-3,-1,+1,+3\}$. In general, $a_n$ is a sequence from the alphabet $\{\pm 1, \pm 3, \cdots,\pm (M-1)\}$. Thus, we refer to $a_n$ as symbols and replace $T_b$ with $T_M$ (a symbol time) from here onwards. The symbol rate $R_M$ is then $1/T_M$.
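As a small illustration of the 4-ary case, the sketch below groups bits in pairs and maps them to symbols in the order listed above (00, 01, 11, 10 to $-3, -1, +1, +3$). Treating that order as the actual mapping is an assumption, as are the names `PAIR_TO_SYMBOL` and `bits_to_symbols` and the value of `T_M`. The tone offsets use $\Delta_F = R_M/4$, i.e., the MSK spacing carried over to symbols.

```python
import numpy as np

# Assumed mapping, taken from the order in which the pairs are listed in the text
PAIR_TO_SYMBOL = {(0, 0): -3, (0, 1): -1, (1, 1): +1, (1, 0): +3}

def bits_to_symbols(bits):
    """Group bits in pairs and map each pair to a 4-ary symbol a_n."""
    if len(bits) % 2:
        raise ValueError("need an even number of bits for M = 4")
    pairs = zip(bits[0::2], bits[1::2])
    return np.array([PAIR_TO_SYMBOL[p] for p in pairs])

bits = [0, 1, 1, 0, 1, 1, 0, 0]
a = bits_to_symbols(bits)

T_M = 2.0                        # assumed symbol time (two bits of unit duration)
tone_offsets = a / (4 * T_M)     # a_n * Delta_F with Delta_F = R_M / 4
print(a, tone_offsets)
```

Each symbol thus selects one of four tones placed symmetrically around the carrier.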

Now let us start by rewriting Eq (4) and Eq (2) and then substituting the former into the latter.

$$\theta_n = \frac{\pi}{2} \sum \limits_{i=0}^{n-1} a_i$$

\begin{align*} s(t) &= A \cos \Big[2\pi F_c t + 2\pi\frac{a_n R_M}{4} (t-nT_M) + \theta_n\Big] \\ &=A \cos \Big[2\pi F_c t + 2\pi \frac{1}{2}\cdot \frac{t-nT_M}{2T_M}a_n + \frac{\pi}{2} \sum \limits_{i=0}^{n-1} a_i\Big] \\ &= A \cos \Big[2\pi F_c t + 2\pi h a_n \cdot q(t-nT_M) + \pi h \sum \limits_{i=0}^{n-1} a_i\Big] \end{align*}

where we have defined the following terms.

\begin{align*} h &= \frac{1}{2} \\ q(t) &= \begin{cases} 0 & t \le 0 \\ \frac{t}{2T_M} & 0 \le t \le T_M \\ \frac{1}{2} & t \ge T_M \end{cases} \end{align*}

It turns out that $h$ is a general concept known as the modulation index, which in the case of MSK is equal to $1/2$. In general, $h$ is defined as

$$h = 2 \frac{\Delta_F}{R_M}$$

which expresses the frequency separation $2\Delta_F$ as a fraction of the symbol rate. With the definition of $h$, we can write

$$s(t) = A \cos \Big[2\pi F_c t + 2\pi h \Big( a_n q(t-nT_M)+ \frac{1}{2} \sum \limits_{i=0}^{n-1} a_i\Big)\Big]$$

The above equation is true for the interval $nT_M \le t \le (n+1)T_M$. Considering from its definition that $q(t)$ is $1/2$ after a symbol interval, we can also write $s(t)$ as

\begin{align*}s(t) &= A \cos \Big[2\pi F_c t + 2\pi h \sum \limits _{i=0}^{n} a_i q(t - iT_M) \Big]\quad ---- \quad \text{Eq (9)}\\ &= A \cos \Big[2\pi F_c t + 2\pi h \int _{-\infty}^{t} \Big( \sum \limits _{i=0}^n a_i g(u - iT_M)\Big) du \Big] \end{align*}

where $g(t)$ is defined as the derivative of $q(t)$, i.e.,

$$q(t) = \int \limits _{0}^{t} g(u) du$$

Notice that in the present case with $q(t)$ defined as above, its derivative $g(t)$ is a rectangular pulse shape. Consequently, $\sum \limits _i a_i g(u - iT_M)$ is the standard baseband Pulse Amplitude Modulated (PAM) waveform with a rectangular pulse shape, and the integral is how its discontinuities are transformed into a continuous-phase signal.

It is refreshing to conclude that the starting point for a continuous-phase modulated signal is a standard pulse amplitude waveform, in which the discontinuities are smoothed out by the integral operation.
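To see that Eq (9) reproduces the MSK phase, the sketch below evaluates the excess phase $2\pi h \sum_i a_i q(t - iT_M)$ for $h = 1/2$ and compares it with the phase term of Eq (2). The function names `q_rect` and `cpfsk_phase` and the example symbols are illustrative assumptions. The sum runs over all symbols rather than stopping at $n$, which gives the same result because $q(t - iT_M) = 0$ for symbols that have not started yet.

```python
import numpy as np

def q_rect(t, T):
    """q(t) as defined above: 0 for t <= 0, a ramp t/(2T), then 1/2 for t >= T."""
    return np.clip(t / (2 * T), 0.0, 0.5)

def cpfsk_phase(a, t, T, h):
    """Excess phase 2*pi*h * sum_i a_i q(t - i*T), following Eq (9)."""
    a = np.asarray(a, dtype=float)
    i = np.arange(len(a))
    shifted = t[None, :] - i[:, None] * T          # one row per symbol
    return 2 * np.pi * h * np.sum(a[:, None] * q_rect(shifted, T), axis=0)

a = np.array([+1, -1, -1, +1, +1])
T = 1.0
t = np.linspace(0, len(a) * T, 501, endpoint=False)
phi = cpfsk_phase(a, t, T, h=0.5)

# Phase term of Eq (2) with theta_n from Eq (4), for comparison
n = (t // T).astype(int)
theta = (np.pi / 2) * np.concatenate(([0], np.cumsum(a[:-1])))
phi_msk = 2 * np.pi * a[n] / (4 * T) * (t - n * T) + theta[n]

phi_end = cpfsk_phase(a, np.array([len(a) * T]), T, h=0.5)[0]
print(np.max(np.abs(phi - phi_msk)), phi_end)
```

Passing M-ary symbols such as $\pm 1, \pm 3$ or a different `h` to `cpfsk_phase` gives the corresponding CP-FSK phase trajectory without changing the code.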

More Generalization: From Continuous-Phase FSK (CP-FSK) to Continuous-Phase Modulation (CPM)

Now referring to Eq (9), the expression for a CPM signal can be written as

$$s(t) = A \cos \Big[2\pi F_c t + 2\pi \sum \limits _{i=0}^{n} h_i \cdot a_i q(t - iT_M) \Big]\qquad nT_M \le t \le (n+1)T_M$$

Here, $h_i$ is a sequence of modulation indices, each of which is a ratio of two relatively prime integers.

$$h = \frac{k}{p}$$

This is the case of multi-h CPM. When all $h_i=h$, the modulation index is the same for all symbols. This is the category we saw in CP-FSK and MSK above.

Finally, $q(t)$ is a waveform shape known as the phase response of the modulator. For a rectangular pulse, it is normalized as

$$q(t) = \begin{cases} 0 & t \le 0 \\ \frac{t}{2LT_M} & 0 \le t \le LT_M \\ \frac{1}{2} & t \ge LT_M \end{cases}$$

where $L$ is the length of a pulse $g(t)$ in symbols. Since angular frequency is the rate of change of phase, this $g(t)$ is the derivative of $q(t)$ and known as the frequency response of the modulator.

$$g(t) = \frac{dq(t)}{dt}$$

For any pulse shape $g(t)$, $L=1$ results in full response CPM, while the other case $L>1$ is the partial response CPM. Commonly used pulse shapes are rectangular (as used in the case of MSK), raised cosine and Gaussian.
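The relation between $g(t)$ and $q(t)$ can be sketched numerically for the rectangular case. The code below assumes a rectangular frequency pulse of length $LT_M$ and height $1/(2LT_M)$, so that its area (and hence the final value of $q$) is $1/2$. The function names and the step size `dt` are illustrative choices.

```python
import numpy as np

def g_rect(t, T, L=1):
    """Rectangular frequency pulse of length L*T with area 1/2 (assumed form)."""
    return np.where((t >= 0) & (t < L * T), 1.0 / (2 * L * T), 0.0)

def q_from_g(g_samples, dt):
    """Phase response q(t) as the running integral of g (simple Riemann sum)."""
    return np.cumsum(g_samples) * dt

T, dt = 1.0, 1e-3
t = np.arange(0, 4 * T, dt)
q_full = q_from_g(g_rect(t, T, L=1), dt)       # full response, L = 1
q_partial = q_from_g(g_rect(t, T, L=2), dt)    # partial response, L = 2

print(q_full[500], q_full[-1], q_partial[1000], q_partial[-1])
```

With $L = 1$ the ramp finishes within one symbol, while with $L = 2$ it is only halfway at $t = T_M$, so each symbol keeps influencing the phase during the following symbol interval. Swapping `g_rect` for a raised cosine or Gaussian pulse of the same area would give the other pulse shapes mentioned above.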

We can see that CPM is in fact a very large class of modulation schemes owing to different pulse shapes $g(t)$, modulation indices $h_i$ and the modulation alphabet size $M$. That is both a blessing and a curse: a blessing due to the remarkable variety of signals it produces, all yielding excellent spectral and power efficiencies, and a curse due to the high receiver complexity. By virtue of Moore's law, this is becoming less of an issue, thanks to the powerful baseband processors of the modern age.


Comments

In the "A Generalization: From MSK to Continuous-Phase FSK (CP-FSK)" section, you discuss going from a binary system to an M-ary system where a_n can be {+/-1,+/-3,...,+/-(M-1)} which reflects the number of modulation symbols and information bits for each mod. symbol. From this, I have two questions:

1. What kind of input would go through this system? I know for CP-BFSK (in the previous section) one could input a string of binary values (i.e. 0110010111001) and the output would be a waveform combining two sinusoids of the respective frequencies of the 0 bit and 1 bit (like Figure 4). In the example where you state the modulation symbols 00, 01, 10 and 11 would have a_n values of (-3,-1,1,3) but would you input something like "01 10 11 00 11 10 01" and output a waveform of the respective sinusoids?

2. My main question is: in an M-ary system greater than M=2 (binary), would the number of carrier frequencies increase? In the first explanation of BFSK, bits 0 and 1 had frequencies F0 and F1. Therefore, in the example above, would bits 00, 01, 10 and 11 have frequencies of F0, F1, F2, and F3?

Thanks!

Your question 2 contains the answer to your question 1. Yes, the input would be groups of two bits each and the output would be 1 out of 4 possible frequencies. Remember, CP-FSK is also FSK, just with a continuous phase. The values (-3,-1,1,3) determine how far the modulated frequency lies from the carrier.

Thanks for the response! My only follow-up question is this: how would the four frequencies F1, F2, F3 and F4 affect the calculation of Delta F and Fc?

In the binary case, Delta F = (F1 - F0)/2 and Fc = (F0 + F1)/2. How would this change with four frequencies given?

Thanks again!

DeltaF remains the same, whether you compute the difference between frequencies 0 and 1, 1 and 2, or 2 and 3, etc. Carrier frequency then can be between carriers 1 and 2.

Thank you , it was really well explained.

I have a question. I know MSK is used in typical IEEE 802.15.4 transceivers (such as CC2420). These transceivers usually feature a carrier offset compensation module, to account for differences between independent carriers at the transmitter and receiver side.

Is, in these transceivers, MSK coherently detected? Could it also be non-coherently detected, such as FSK?

Can standard BLE transceivers (which use GFSK) from TI, Nordic, etc. be categorized as coherent or non-coherent receivers? Where is the boundary? They usually have some frequency compensation system but, at the same time, do not perform accurate phase recovery and complete carrier estimation.

I find it difficult, since most transceivers do not even specify a block diagram. Do you know which is the usual way to go in BLE (coherent or non-coherent)?

Thank you!

Glad you liked it. There are many different ways to detect an MSK signal. How it is implemented in IEEE 802.15.4 transceivers is anybody's guess because companies closely guard their implementations as trade secrets. You will find many publications on such aspects from the industry side in 1970s and 1980s but with more money entering the wireless market, this trend is going down (I think). Coming back to your question, if the objective is superior performance, MSK should be coherently detected like OQPSK (or sequence estimation). If the objective is low power, it can be done through a non-coherent detection scheme for FSK. There are other factors involved as well. For example, if the chip has a baseband setup for some other linear modulation scheme, then it can use the same hardware/software for MSK as well.

Thank you!

Most low-cost/low-power IoT receivers are non-coherent, at least in the analog shifting to IF/baseband, but they feature some coherent properties, like frequency/phase error compensation (normally estimated during the packet preamble).

This estimation is then used in the digital DSP to aid the demodulation process, so while the analog part is non-coherent, the digital part accounts for mismatches between the local carriers.

Do you agree that the boundary between coherent/non-coherent is more diffuse?

This shift is decades old now. Whether analog or digital, coherence implies acquiring the carrier regardless of the methodology. I don't see any confusion in the boundary between coherence and non-coherence.

Hello! Is there any chance that you have source code of a MATLAB program that produces the MSK as QPSK waveforms? I've been trying to recreate the data that you've provided in your examples but keep running into a few issues. Any help would be greatly appreciated!

Thank you for an excellent article! It provides a high-level view of a non-trivial subject, and at the same time features compactness, rigor and intelligibility. Definitely, you don't have to choose any two of these characteristics: you manage to blend all three!

There is a slightly misleading sentence in the section "MSK as Continuous-Phase FSK", clause 2:

Observe in the above equation that the phase continuity is not necessarily maintained from one symbol to the next.

Actually that equation (BFSK with minimum spacing) guarantees the phase discontinuity: each symbol period starts with zero phase offset, and finishes with +/-pi/2.

Thanks! It's very very helpful for me to understand. Perfect tutorials!

Thank you for a very detailed tutorial. A quick question regarding Figures 4 and 5; the tutorial states "Below, we plot $\theta_n$ as a function of time in Figure 5 to see how it evolves. One can observe that it indeed changes values in steps of $\pi/2$ depending on the last data bit." If Figure 4 is the carrier wave, it is difficult to visualize the pi/2 phase transitions of Figure 5. Perhaps I misinterpret the meaning of the two figures.

Good question. $\theta_n$ is indeed the phase that is required to make the waveform phase continuous at the boundaries.

Thanks for your tutorial.

what is the T in the modulation phase response?

It is actually $T_M$, the symbol time. I updated it. Thanks for pointing that out.

Dear author,

First of all, thank you for your detailed article. It really helped me a lot. As I was trying to implement the OQPSK to MSK symbol mapping (d_{I,n}, d_{Q,n} to a_n), I noticed that it's actually:

a_n = (-1)^(n-1) * d_{Q,n} / d_{I,n}

Should there be such a term in your derivation? I can't figure it out.

Thank you

Sincerely,

Hi,

Thanks for your kind words. My derivation actually goes from MSK to OQPSK. I could include a section from OQPSK to MSK symbol mapping but that will probably make the article longer.
