Spline interpolation

A cookbook recipe for segmented y=f(x) 3rd-order polynomial interpolation based on arbitrary input data.

Includes Octave/Matlab design script and Verilog implementation example.

Keywords: Spline, interpolation, function modeling, fixed point approximation, data fitting, Matlab, RTL, Verilog

Introduction

Splines describe a smooth function with a small number of parameters.

They are well-known for example from vector drawing programs, or to define a "natural" movement path through given points in computer animation.

They are an engineer's tool, apparently originating from the shipbuilding industry, with widespread use documented from world war II to make "backup copies" of aircraft design templates that did not fit into a bomb shelter.

Splines are convenient to model arbitrary continuous functions, as fixed-point implementation is efficient and straightforward. In DSP applications, a spline's continuous first derivative helps to confine interpolation error close to frequencies of the signal, which is often desirable (i.e. to limit audio aliasing to higher frequencies where the ear is more sensitive). For example, Catmull-Rom splines are commonly used for low-cost audio resampling.

This article provides a recipe to construct spline coefficients from arbitrary scattered points via a least-squares solver.

For practical design problems this allows a fluid "cooking-style" design process, where the result is shaped by adding (or removing) input data to control the approximation error.

Figure 1: Spline approximation of a function, possibly from noisy data

The splines discussed in this article consist of multiple 3rd-order polynomial sections.

Transitions between segments are continuous both in value and in the first derivative, resulting in a function that is for most practical purposes fairly "smooth".

Here, continuity in the second derivative is not enforced. This results in a more accurate function approximation, at the expense of "smoothness".

Examples

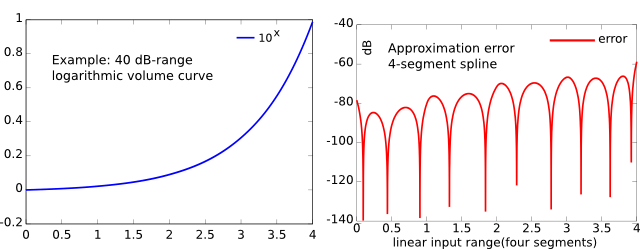

The example in figure 2 is a logarithmic volume control curve from a DIY FPGA project. Using four segments, the spline approximation is virtually indistinguishable from the original exponential function. The approximation error is well below 1 percent (-40 dB).

Figure 2: A four-segment spline modeling a 40 dB-range volume curve

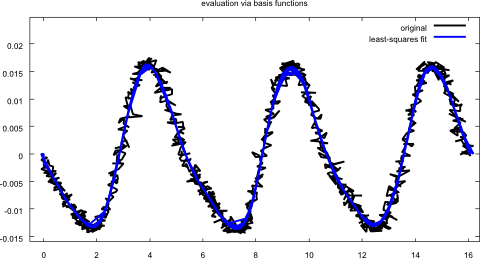

A second example (figure 3) shows a spline fit to noisy and non-gridded data.

It originates from the same project, where the target waveform represents three cycles of a Hammond organ drawbar signal, with noise added both to sampled values (y axis) and sampling time instants (x axis).

As can be seen, the design process is robust towards irregularities in the data.

Figure 3: 16-segment spline through a very noisy Hammond organd drawbar sample

The math

For segmentation, the input variable is split into an integer part and a fractional part (figure 4).

Figure 2: A four-segment spline modeling a 40 dB-range volume curve

For a number of segments that is a power of two, the separation is done by taking the higher- and lower bits.

The Verilog implementation below shows the details for signed numbers.

Each of the segments is described by a polynomial y(x) = a0+a1x+a2x2+a3x3 with x in a domain interval -1..1 covering the segment (see "polynomial variable" in figure 4).

Note that a segment drawn as k..k+1 appears in the polynomial evaluation with twice the range [-1..1].

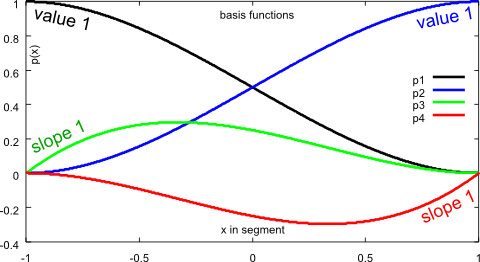

Within a segment, four basis functions p1.. p4 are defined that have zero value and zero slope at x = +/-1, with the exception of

- p1 has value 1 at x = -1

- p2 has value 1 at x = 1

- p3 has slope 1 at x = -1

- p4 has slope 1 at x = -1

Figure 5: Basis functions

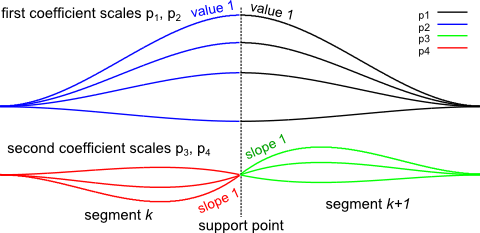

For each support point, a first scaling factor is assigned to a pair of basis functions p1, p2 on the left and right side of the support point, respectively: The scaling factor controls the value of the spline at the support point, but does not affect the slope (because the slope of both p1, p2 is zero at the support point). This is shown in the upper half of Figure 6.

In a similar manner, a second scaling factor is assigned to p3, p4 to set the slope, without affecting the value at the support point (lower half of Figure 6).

Figure 6: Scaled basis functions enforcing continuity through a support point

The scaling factors are then found by minimizing the difference between input data and approximation in a least-squares problem.

Finally, the polynomial coefficients for each spline segment are determined by summing up all four scaled basis function polynomials.

The derivation for the basis functions in Maxima CAS can be found here.

The discussed approach is slightly different, as it uses the slope as an explicit parameter.

Number of support points

To span n segments, n+1 support points are needed.

Relevant for all practical purposes is the number of segments, not support points, as each segment defines one set of polynomial coefficients.

For example, a fixed-point implementation may use four MSBs to select one of 16 segments. The equation system in the design process will use 17 support points.

In the provided Matlab program, the outermost support points do not need any special consideration with regard to continuity: In an interval without input data, any basis function becomes invisible to the least-squares cost function.

Octave (Matlab) script

All the little details are taken care of in the Octave / Matlab program, which can be downloaded here.

Several examples to generate data are included. A spline is generated, evaluated and plotted.

Finally, fixed-point coefficients are written to a file that is imported by the Verilog RTL implementation.

RTL implementation

This Verilog implementation demonstrates the evaluation of the spline in RTL. It was written with the 18-bit multipliers on Spartan-6 FPGAs in mind.

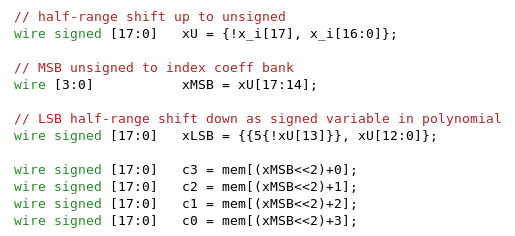

Input variable

The signed input is first converted to offset binary by inverting the sign bit.

The four MSBs become the index into the coefficient table. The LSBs are then converted back in the same manner from offset binary to two's complement signed.

Figure 7: Fixed-point handling of input variable

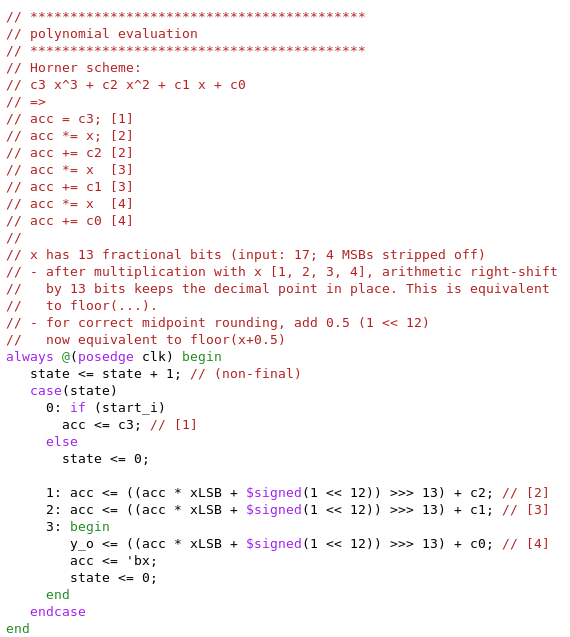

Multiply-accumulate

With four MSBs stripped off, the LSB part of the input variable contains 13 fractional bits. After multiplication with the LSB part, arithmetic right shift by 13 bits restores the decimal point. Prior to that, 0.5 LSB is added for correct midpoint rounding.

In some cases (i.e. only two segments) it may be insufficient and cause internal overflow in the MAC.

If this happens, a straightforward solution is to divide all coefficients by 2 at design time, then simply double the result.

Figure 8: Horner scheme evaluation

In the Verilog code, the four operations of the Horner scheme evaluation are written out explicitly for clarity.

Alternatively, a MAC could be used, with the feedback path opened during the first cycle to load c3 (i.e. "CLR" input).

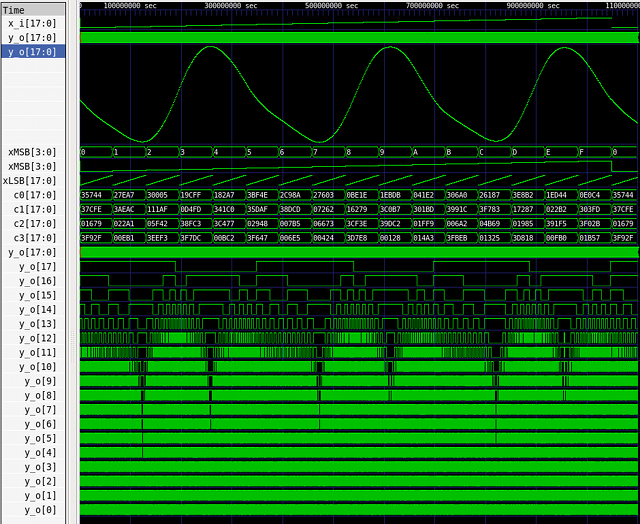

Simulation result

The simulation snapshot in figure 9 covers the whole 18-bit input range of input values.

The segment structure is clearly visible in MSB, LSB and the selected coefficients (see figure 7), and the output signal looks as expected.

Figure 9: Simulation result for "Hammond drawbar" spline

Pipelined RTL implementation

A second RTL version ( download) is a pipelined implementation of the four-segment volume curve example from Figure 2. The evaluation of four independent input variable values is interleaved.

The pipelining generally allows higher clock frequencies by introducing additional delay.

Numerical accuracy

The implementations are not bit-exact because of intermediate rounding after every multiplication. A typical error is one LSB in the output.

Conclusion

This article presents a design method to construct an interpolation function in fixed-point RTL from arbitrary data.

A spline is derived via a least-squares fit. The spline is then evaluated using the Horner scheme.

This work was originally done for an open-source FPGA synth project. If it gets reused (amplifier model, anybody?), I'd be happy to hear about it.

Download

The whole project archive, including the links from the text, can be downloaded here.

- Comments

- Write a Comment Select to add a comment

Thanks for great article. it's been a long time for looking like this subject. and finally I found it.

Currently I'm trying to implement Polynomial equation 5-th order not 3-th order like your article. Could we extend it from 3-th to 5-th?

and I found something go wrong m file on Matlab.

>> splines_140511

Warning: Imaginary parts of complex X and/or Y arguments ignored

> In splines_140511 (line 245)

Error using assert

The condition input argument must be a scalar logical.

Error in splines_140511 (line 259)

f = fopen('fixedPoint.txt', 'w'); assert(f);

Could you check please?

for a least-squares approach as in the Matlab script, it usually boils down to a multiply-accumulate operation between the input data and a (precalculated) row of the matrix inverse. There are some variants (i.e. Lagrange or Catmull-Rom) where the coefficients are polynomials of the support points. It depends on the problem.

Generally, I'd try to avoid doing anything in RTL if it's not performance critical. It sounds more like a job for a soft-core CPU.

Is there a way to calculate the output frequency?

At the moment, I'm simulating the lowest increment in ModelSim (increase Input by one with every clock @ 100MHz) and calculating the frequency of a whole sine wave by marking start and end. There has to be a relationship between component-clock, step-width on the input and the time, the component needs to calculate the new data. I'm using the pipelined version though. Can anyone give some advise?

Hi,

my testbench as it stands is not exactly straightforward in the resulting frequency. Loosely speaking it's the execution time of the FSM (16 cycles) plus a little extra (10 cycles). But I wouldn't bother reverse-engineering that, rather write it in a way that drives a predetermined frequency.

For example, trigger a phase increment and spline evaluation (code: "simulation only, see above: ... increase variables") every 32 clock cycles. Each increase is a delta of four in an 18 bit cycle (262144). This results in a full sine period every 16384 evaluations. With 32 clock cycles per evaluation, one sine period would be 524288 clock cycles and at 100 MHz this corresponds to a sine wave frequency of 100e6 / 524288 = 190.73 Hz.

All that said: The given state machine implementation is not the most straightforward (I needed *lots* of independent waves). You can find an easier-to-use implementation of the same idea here:

This uses four multipliers and creates one output sample per input sample (with some fixed latency). I'd start with that one, if FPGA resources are not an issue.

Thanks for your help, the ramp in front of the spline was the problem.

To get down to somehow mHz-Accuracy I need to increase the input width of the spline to 52 bits, at least with 100MHz. Maybe I'll increment every other clock cycle to lower this again, otherwise the design will eat my fpga :D

For the counter implementation, you could have a look at the divider (=counter) in PLL architectures (e.g. "integer", "dual modulus"). This is fairly straightforward on an FPGA.

If timing becomes an issue, it's also possible to mux between multiple e.g. 4 counter instances with staggered starting values that increase in steps of 4 every 4 clock cycles. Write both the counter update and the output so that it is allowed 4 clock cycles instead of 1, and enable the tool's register rebalancing.

To post reply to a comment, click on the 'reply' button attached to each comment. To post a new comment (not a reply to a comment) check out the 'Write a Comment' tab at the top of the comments.

Please login (on the right) if you already have an account on this platform.

Otherwise, please use this form to register (free) an join one of the largest online community for Electrical/Embedded/DSP/FPGA/ML engineers: