Hi,

I'm currently trying to implement the Overlap Save / Scrap method for educational purposes using KFR and C++.

However, I'm noticing that something is off when the FFT / block size is greater than the signal length. I have to admit that this is not really a real-life scenario (as you'd usually use either direct or standard FFT convolution), but I'd like to understand WHY this happens.

I realised this also happens for other choice of parameters... See below

From my understanding, Overlap Save / Scrap is always valid and should inherently provide the necessary padding, even when the signal is shorter than the FFT Size

void overlapSaveConv(

float const * data,

float* out,

float const * filter,

size_t nSamples,

size_t filterLength,

size_t FFTSize

) {

if(FFTSize < (filterLength + 1)) throw std::invalid_argument("FFT Size must be at least one sample larger than filter length!");

//N = L + M - 1

//N = L + filterLength - 1

//=> L = N - M + 1

const size_t N = FFTSize;

const size_t M = filterLength;

const size_t step_size = N - (M - 1);

//We need to zero pad the filter

std::vector<float> paddedFilter; paddedFilter.resize(FFTSize);

std::memcpy(paddedFilter.data(), filter, sizeof(float) * filterLength);

//Compute Fourier Transform of filter, that will be used a lot of times in the loop

auto H = kfr::realdft(kfr::make_univector(paddedFilter));

//As far as I understand, it is not (easily) possible to implement overlap save in place

//This is due to the fact that we prepend M-1 / overlap zeros and then go stepsize further

//However, if we go stepsize further, the beginning of the data we need has already been overwritten by the previous block

//results (due to the offset from the zeros)

//Two possibilities:

// - Copy the input array

// - Separate buffer for the output array

//As copying the input array allows us to get rid of logic inside the loop

//(recognise whether we need to prepend or append zeros), this is our choice.

//We need to prepend and append zeros to our data to account for transients (prepend) and the block size (append)

std::vector<float> paddedData;

paddedData.resize(

M - 1 + //Prepend M-1 (filterLength - 1) zeros at the beginning

nSamples + //Space for the actual samples

FFTSize //Append zeros at the end so we don't need to worry about in the loop

);

std::memcpy(paddedData.data() + (M - 1), data, sizeof(float)*nSamples);

size_t i = 0;

while(i < nSamples) {

//Gather the data of our current block and perform an FFT

auto xSamplesUnivector = kfr::make_univector(paddedData.data() + i, FFTSize);

auto fftx = kfr::realdft(xSamplesUnivector);

//Apply the filter to our current block

fftx = fftx * H;

//Transform back to the time domain and apply proper scaling!

auto yt = kfr::irealdft(fftx);

yt /= double(yt.size());

//Copy our result to the output

std::memcpy(

out + i,

yt.data() + (M - 1), //Discard filterLength - 1 (M - 1) samples from the beginning

std::min( //Make sure we don't write too many samples (as the last block is zero padded / has zeros appended, but the output target is shorter)

(N - (M - 1)),

nSamples - i + 1

) * sizeof(float)

);

i += step_size;

}

}

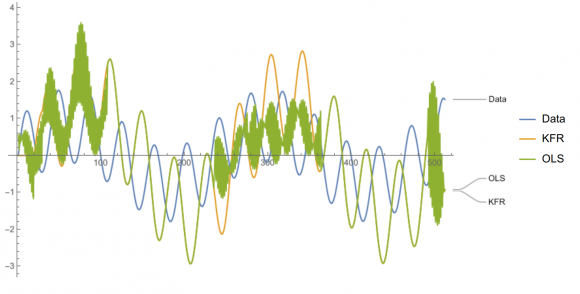

See this example (Signal Length = 2^9 = 512, chosen FFT Block Size = 1024, filter length = 150 samples (Zero everywhere except unit value at 20th and 30th sample):

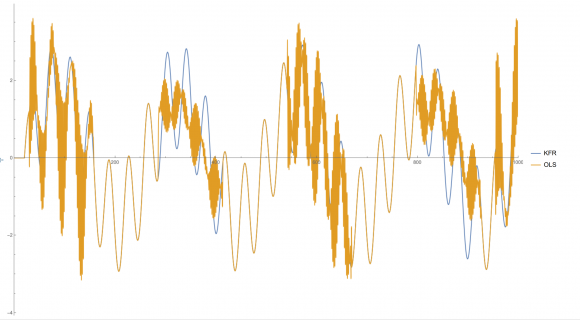

Signal Length = 2^12 (=> 4096), FFT Block Size = 1024, file length = 100 samples (Zero everywhere except unit value at 20th and 30th sample):

Update:

I have been able to partially identify the issue, it must be related to KFR.

When replacing the real dft with a standard complex dsp (minimally invasive), everything works just fine.

It seems like there is an issue when multiplying two real DFTs in KFR and transforming them back.

If you feed a complex DFT with the same data (numbers, not bit representation, of course), there are no issues...